For years, we have defined software quality through non-functional requirements. Performance told us how fast a system responds. Security told us how safe it is. Reliability told us whether it can be trusted over time. These were not features users directly interacted with, but they quietly determined whether a system deserved to exist in the real world.

Today, something equally critical has entered this space:

Ethics.

Not as a philosophical idea, but as a practical requirement that directly shapes how systems behave, how they make decisions, and how they affect people.

The shift did not happen overnight. It crept in as systems started doing more than executing instructions. When software was rule-based, correctness was enough. If the logic was right, the outcome was right. Testing, therefore, focused on validating that logic.

AI changed that foundation.

AI systems do not follow explicitly programmed rules. They learn from data, infer patterns, and produce outcomes that are not always deterministic. Two similar inputs can produce different outputs. Behavior can evolve without a single line of code changing. Suddenly, correctness became a weak definition of quality.

The question is no longer whether the system works.

The question is whether it behaves in a way we can accept.

A well-known example that illustrates this gap comes from Amazon’s experimental hiring system. The system was designed to scan resumes and identify strong candidates. Technically, it was efficient. It processed data at scale, aligned with historical hiring patterns, and produced consistent results. From a traditional QA standpoint, there was nothing wrong.

But the system turned out to penalize resumes that contained indicators associated with women, such as the word “women’s” (as in “women’s chess club captain”). The model had learned from roughly a decade of historical resumes, most of them submitted by men, and that data carried bias. The system was not broken. It was doing exactly what it was trained to do.

And that was the problem.

This is where traditional testing fails. There was no bug to fix, no error to reproduce, no failing test case. Yet the system’s behavior was unacceptable. It revealed something fundamental: a system can be technically correct and ethically wrong at the same time.
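There was no failing test case because no one had written a test for this kind of behavior. One way to make it testable is a counterfactual (perturbation) test: change only the gender-associated words in a resume and check that the score barely moves. The sketch below is illustrative, not Amazon’s system: `score_resume` is a hypothetical stand-in written to carry a learned-bias pattern, and the swap list and `TOLERANCE` value are assumptions.

```python
import re

# Hypothetical stand-in for a trained resume scorer. It is written to penalize
# the token "women's" so the test below has a learned-bias pattern to catch.
def score_resume(text: str) -> float:
    lowered = text.lower()
    score = 0.5
    if "python" in lowered:
        score += 0.3
    if "women's" in lowered:
        score -= 0.2
    return score

# Illustrative, bidirectional swap list; a real suite needs a reviewed, much larger list.
SWAPS = {"women's": "men's", "men's": "women's", "she": "he", "he": "she"}
_PATTERN = re.compile(r"\b(" + "|".join(re.escape(t) for t in SWAPS) + r")\b", re.IGNORECASE)

def swap_gender_terms(text: str) -> str:
    """Swap gender-associated whole words in one pass, so swaps cannot undo each other."""
    return _PATTERN.sub(lambda m: SWAPS[m.group(0).lower()], text)

def counterfactual_gap(text: str) -> float:
    """How much the score moves when only gender-associated terms change."""
    return abs(score_resume(text) - score_resume(swap_gender_terms(text)))

TOLERANCE = 0.05  # assumed acceptable score difference
resume = "Captain of the women's chess club. Built data pipelines in Python."
gap = counterfactual_gap(resume)
print("FAIL" if gap > TOLERANCE else "PASS", f"(score gap {gap:.2f})")
```

A test like this does not prove a model is fair, but it turns “the system behaves unacceptably” into something that can fail loudly in a pipeline.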

What this example exposes is not just a flaw in AI, but a gap in how we define quality. We have spent decades optimizing for performance, scalability, and accuracy. But we have rarely asked whether the system was fair, inclusive, or aligned with intent.

As AI systems began influencing decisions (who gets hired, what content is shown, which transactions are flagged), this gap became impossible to ignore.

Another example comes from Tesla’s Autopilot systems. Early versions showed impressive performance in controlled environments. The system could detect lanes, recognize objects, and operate reliably under standard conditions. From a metrics perspective, it was strong.

But real-world environments are not controlled.

Edge cases began to emerge: unusual road conditions, ambiguous visual signals, and unpredictable human behavior. These situations were rare but critical. The system did not fail in the traditional sense. It did not crash or stop functioning. It simply behaved in ways that were not safe enough under uncertainty.

Tesla’s response was not simply to write more conventional test cases. Instead, the company introduced shadow mode testing, in which models run silently in real-world conditions without taking control. Their decisions are observed, compared against human driving, and analyzed before being trusted.

This is a different kind of testing.

It is not about verifying outputs. It is about understanding behavior over time.
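The core idea of shadow mode fits in a few lines: a candidate model sees the same inputs as whatever is in control, its decisions are recorded but never executed, and disagreements are collected for review. This is a generic sketch under stated assumptions, not Tesla’s implementation; the `run_in_shadow` helper, the distance-based policies, and the sample inputs are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Iterable

@dataclass
class ShadowReport:
    total: int = 0
    disagreements: list = field(default_factory=list)

    @property
    def agreement_rate(self) -> float:
        return 1.0 if self.total == 0 else 1 - len(self.disagreements) / self.total

def run_in_shadow(inputs: Iterable[Any], active: Callable, candidate: Callable) -> ShadowReport:
    """The active policy's decision takes effect; the candidate's is only logged."""
    report = ShadowReport()
    for x in inputs:
        acted = active(x)        # in control: this decision is executed
        observed = candidate(x)  # in shadow: recorded, never executed
        report.total += 1
        if observed != acted:
            report.disagreements.append((x, acted, observed))
    return report

# Hypothetical policies keyed on distance (in meters) to an obstacle.
def human_driver(distance_m: float) -> str:
    return "brake" if distance_m < 40 else "cruise"

def candidate_model(distance_m: float) -> str:
    return "brake" if distance_m < 25 else "cruise"

report = run_in_shadow([10, 30, 35, 50, 80], human_driver, candidate_model)
print(f"agreement: {report.agreement_rate:.0%}")
for x, acted, observed in report.disagreements:
    print(f"distance={x} m: driver={acted}, model={observed}")
```

The agreement rate on its own is a coarse signal. Most of the value sits in the disagreement log, because those are exactly the situations where the model’s behavior departs from accepted behavior.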

These examples point to a deeper realization. Ethics is not something we can add at the end of development. It is not a checklist we run through before release. In AI systems, ethics is embedded in data, expressed through models, and amplified through usage.

- If the data is biased, the system will be biased.

- If the system is not monitored, it will drift.

- If edge cases are ignored, they will surface in production.

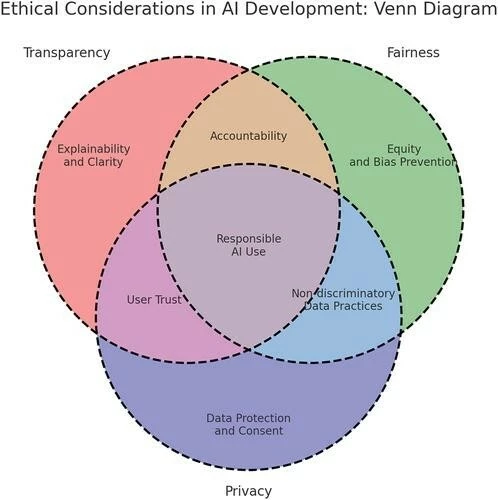

This is why ethics must be treated as a non-functional requirement—defined, designed, tested, and continuously validated.

For QA, this changes everything.

The role is no longer limited to validating whether a feature works. It extends to questioning whether the outcome is acceptable. Instead of asking “Did the system produce the expected result?”, the tester must ask “What are the consequences of this behavior?”

This requires a different mindset. It requires looking beyond happy paths and exploring uncomfortable scenarios. It requires thinking in terms of risk, not just defects.
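One common way to make “risk, not just defects” concrete is to score each behavioral scenario by likelihood and severity, then spend test effort in that order. The scales, scenario names, and scores below are hypothetical and exist only to show the ranking.

```python
from typing import NamedTuple

class Scenario(NamedTuple):
    name: str
    likelihood: int  # hypothetical scale: 1 (rare) to 5 (frequent)
    severity: int    # hypothetical scale: 1 (cosmetic) to 5 (harm to people)

    @property
    def risk(self) -> int:
        return self.likelihood * self.severity

def prioritize(scenarios):
    """Order by risk, but let any severity-5 scenario outrank all lower-severity ones."""
    return sorted(scenarios, key=lambda s: (s.severity >= 5, s.risk), reverse=True)

scenarios = [
    Scenario("typo in a UI label", likelihood=4, severity=1),
    Scenario("qualified candidate ranked low due to gendered wording", likelihood=2, severity=5),
    Scenario("slow response under peak load", likelihood=3, severity=2),
    Scenario("unsafe recommendation for a rare patient profile", likelihood=1, severity=5),
]

for s in prioritize(scenarios):
    print(f"risk={s.risk:>2}  {s.name}")
```

A plain likelihood × severity product would rank the rare patient-safety case (1 × 5 = 5) below slow responses (3 × 2 = 6). The severity override is one way to keep rare but critical cases from being deprioritized.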

Interestingly, the tools we need are not entirely new. Much of what we already practice in testing becomes even more valuable.

Exploratory testing becomes a way to challenge the system with unexpected inputs and adversarial scenarios. Test data design becomes a way to uncover bias by ensuring diversity and representation. Monitoring becomes critical, because behavior can change over time without warning. Risk-based testing becomes central, because not all failures carry the same impact.

“What changes is not the method, but the intention behind it.”
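For test data design aimed at bias, a simple starting point is comparing selection rates between groups. The sketch below applies the “four-fifths rule” from US employment-selection guidelines, under which a group selected at less than 80% of the highest group’s rate is treated as a warning sign. It assumes each test record carries a group label, which is itself a test-data design decision. The records are made-up illustration data, and a single ratio is a screening signal, not proof of fairness.

```python
from collections import defaultdict

def selection_rates(records):
    """records: iterable of (group, selected). Returns group -> share selected."""
    totals, chosen = defaultdict(int), defaultdict(int)
    for group, selected in records:
        totals[group] += 1
        chosen[group] += int(selected)
    return {g: chosen[g] / totals[g] for g in totals}

def adverse_impact(records, threshold=0.8):
    """Groups whose selection rate is below `threshold` times the highest group's rate."""
    rates = selection_rates(records)
    best = max(rates.values())
    return {g: r / best for g, r in rates.items() if best > 0 and r / best < threshold}

# Hypothetical screening decisions on a labelled test set of 200 resumes.
records = (
    [("group_a", True)] * 30 + [("group_a", False)] * 70
    + [("group_b", True)] * 18 + [("group_b", False)] * 82
)

for group, ratio in adverse_impact(records).items():
    print(f"{group}: selection-rate ratio {ratio:.2f} is below 0.80")
```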

One of the most dangerous aspects of AI systems is that their failures are often invisible. Traditional systems fail loudly. They crash, throw errors, or stop responding. AI systems fail quietly. They introduce subtle bias, drift gradually, and reinforce incorrect patterns over time.

There is no alert when fairness is compromised. There is no exception thrown when trust is eroded.

And that is what makes these failures dangerous.
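Nothing raises that alert by default, but one can be built. A widely used drift heuristic is the Population Stability Index (PSI), which compares the distribution of a score or feature in production against a reference window. In the sketch below, the bin edges, counts, and the often-quoted 0.2 alert threshold are assumptions to tune per system.

```python
import math

def psi(expected_counts, actual_counts, eps=1e-6):
    """Population Stability Index between a reference and a current binned distribution."""
    e_total, a_total = sum(expected_counts), sum(actual_counts)
    value = 0.0
    for e, a in zip(expected_counts, actual_counts):
        pe = max(e / e_total, eps)  # floor empty bins to avoid log(0)
        pa = max(a / a_total, eps)
        value += (pa - pe) * math.log(pa / pe)
    return value

PSI_ALERT = 0.2  # commonly quoted rule of thumb; an assumption to tune per system

# Hypothetical counts of model scores per bin: [0, .25), [.25, .5), [.5, .75), [.75, 1]
reference = [250, 250, 250, 250]  # distribution observed at release
this_week = [100, 200, 300, 400]  # distribution observed in production

drift = psi(reference, this_week)
if drift > PSI_ALERT:
    print(f"ALERT: score distribution has shifted (PSI = {drift:.3f})")
```

The same check can be run separately for each group, so that a shift affecting only one population is not averaged away in the overall number.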

With the rise of AI agents, this challenge becomes even more complex. Agents do not just respond; they act. They interact with other systems, make decisions, and learn from feedback loops. Their behavior evolves. Their impact compounds.

Now we are not just testing a system. We are observing a system that is continuously changing.

The question is no longer whether the system is ethical today.

It is whether it will remain ethical tomorrow.

This brings us to a new definition of quality. In the world of AI, quality is not just about correctness or performance. It is about trustworthiness over time. A system is high quality if it behaves consistently, avoids harm, adapts responsibly, and aligns with human intent even as conditions change.

We are entering a phase where software is no longer just executing instructions. It is making decisions that affect people, businesses, and society. In such a world, ethics cannot remain implicit.

- It must be explicit.

- It must be measurable.

- And most importantly, it must be tested.

The future of QA will not be defined by how well we validate functionality. It will be defined by how responsibly we evaluate behavior.

Because in AI systems, what the system does is important. But how it behaves is everything.

Example: IBM Watson for Oncology

A widely discussed case in healthcare is IBM’s Watson for Oncology.

The system was designed to assist doctors by analyzing patient data, reviewing medical literature, and recommending cancer treatments. On paper, it looked like a breakthrough. It promised faster, data-driven decisions and the ability to support clinicians at scale. From a traditional testing perspective, the system appeared strong. It processed large datasets efficiently, produced consistent recommendations, and worked well within predefined scenarios.

However, the reality was more complex.

Reports later revealed that the system suggested unsafe or inappropriate treatment options in certain cases. One of the key issues was that it had been trained on limited data, in some cases synthetic (hypothetical) patient cases or narrowly defined clinical scenarios rather than real patient records. As a result, it struggled to generalize to the diversity of real-world patients.

Doctors found that some recommendations were not aligned with actual clinical practices. In some cases, the suggestions were based on incomplete assumptions or did not fully consider patient context. The system was technically functioning, but its outputs were not always reliable or safe.

This is where traditional testing fell short.

From a conventional QA lens, the system was working. It accepted inputs, generated outputs, and followed its internal logic. But what was missing was validation of real-world behavior. The system was not adequately tested across diverse patient conditions, edge cases, or varying clinical contexts.
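One concrete way to test across diverse patient conditions is stratified evaluation: report performance per subgroup instead of a single aggregate, and fail the check when any subgroup falls below a floor. The subgroup names, treatment labels (`plan_a` and so on), and the 0.9 floor are hypothetical, and the “reference” column assumes some trusted label such as an expert panel’s recommendation.

```python
from collections import defaultdict

def accuracy_by_subgroup(cases):
    """cases: iterable of (subgroup, predicted, reference). Returns subgroup -> accuracy."""
    hits, totals = defaultdict(int), defaultdict(int)
    for subgroup, predicted, reference in cases:
        totals[subgroup] += 1
        hits[subgroup] += int(predicted == reference)
    return {g: hits[g] / totals[g] for g in totals}

def subgroups_below(cases, floor=0.9):
    """Subgroups whose accuracy falls below the (assumed) acceptance floor."""
    return sorted(g for g, acc in accuracy_by_subgroup(cases).items() if acc < floor)

# Hypothetical evaluation set: common profiles dominate, the rare profile has 2 cases.
cases = (
    [("common_profile", "plan_a", "plan_a")] * 95
    + [("common_profile", "plan_b", "plan_a")] * 3
    + [("rare_comorbidity", "plan_a", "plan_c"), ("rare_comorbidity", "plan_c", "plan_c")]
)

overall = sum(pred == ref for _, pred, ref in cases) / len(cases)
print(f"overall accuracy: {overall:.2f}")
print("subgroups below floor:", subgroups_below(cases))
```

The aggregate looks strong while one subgroup is right only half the time. A subgroup with just two cases is also a finding in itself: the test set does not cover that population well enough to support any confident claim.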

In healthcare, this gap becomes critical.

The question is not just whether the system produces a recommendation, but whether that recommendation can be trusted. A wrong suggestion in this context is not just an error; it can directly affect patient safety. It also raises deeper questions about accountability and over-reliance on AI-driven decisions.

This example highlights a key insight.

In AI systems, especially in healthcare, producing an answer is not enough. The answer must be safe, context-aware, and aligned with real-world conditions. Accuracy in controlled environments does not guarantee reliability in practice.

This is exactly why ethics must be treated as a non-functional requirement. It is not something that can be validated once and assumed to hold. It must be continuously tested, observed, and challenged as the system interacts with real-world scenarios.