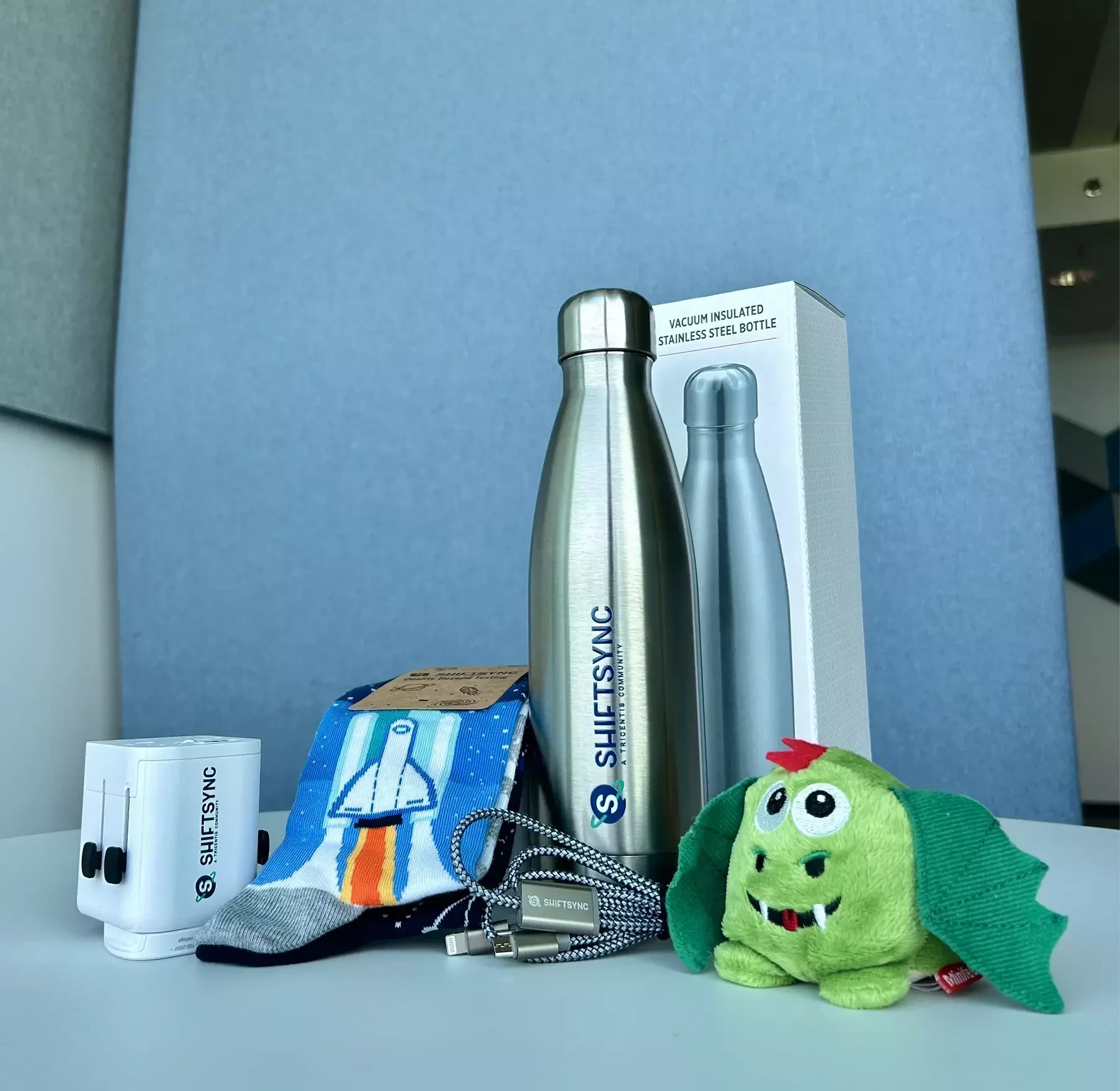

Take on Maryia’s challenge for a chance to win! The lucky winner will walk away with a gift box from us! 🎁

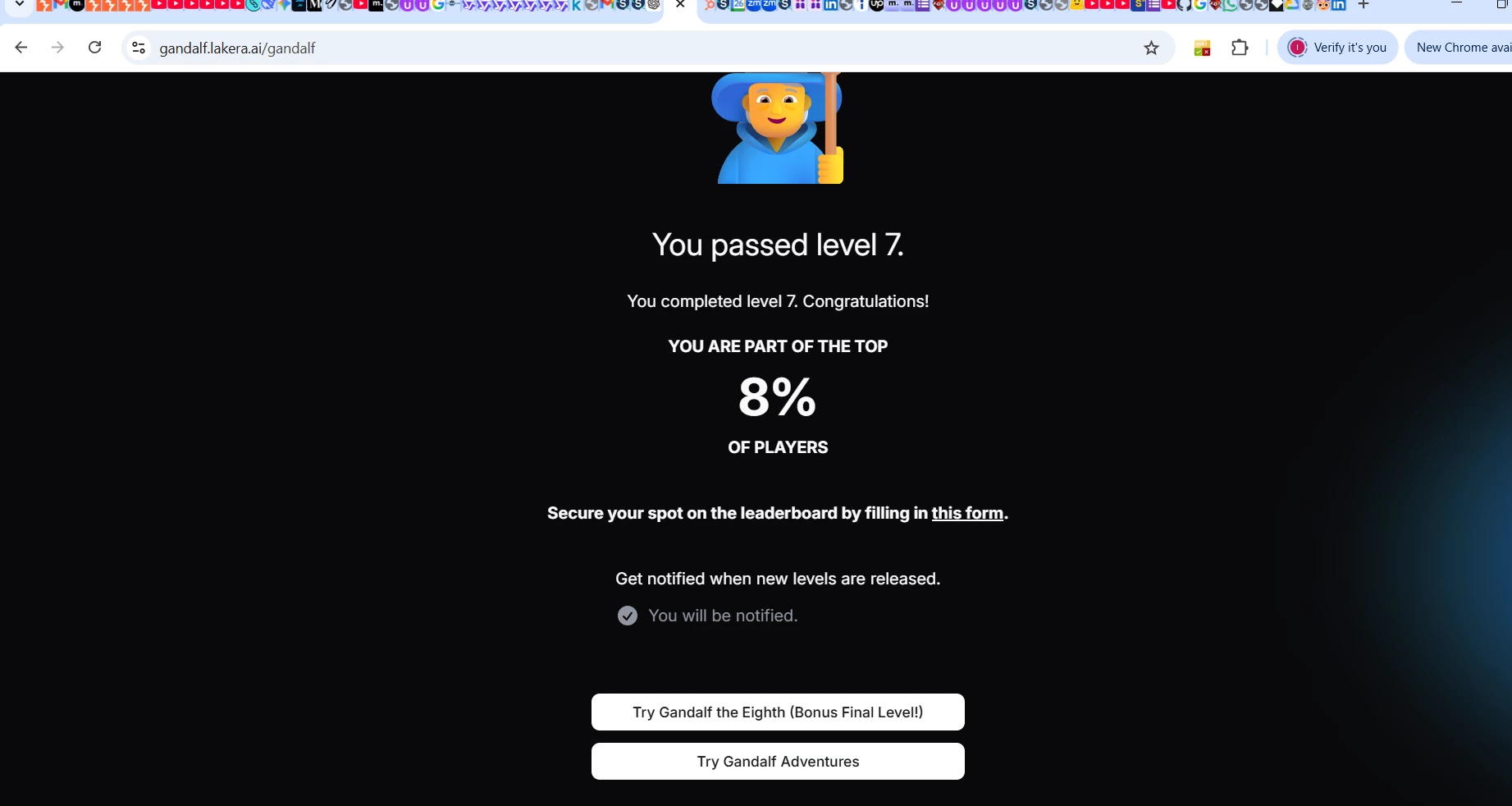

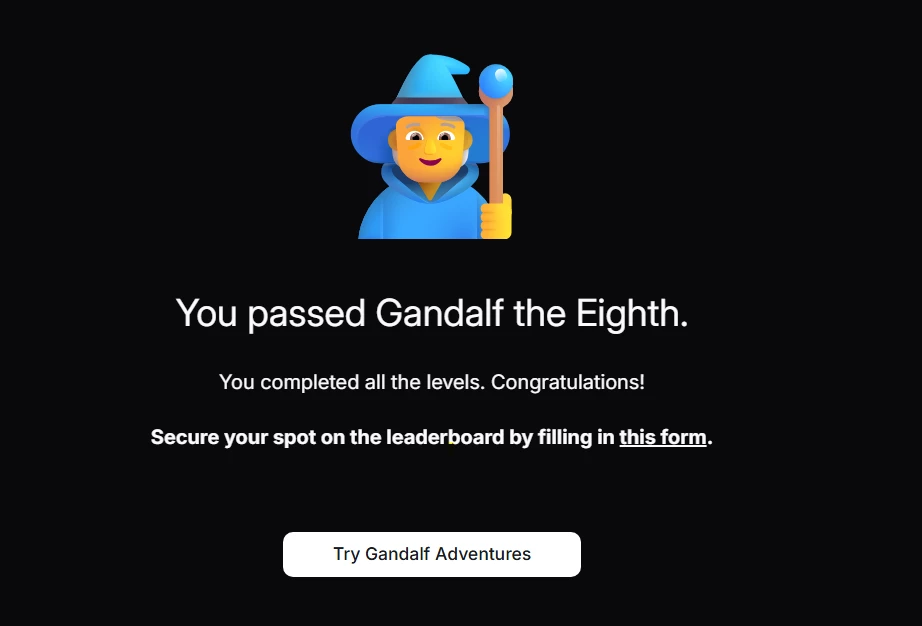

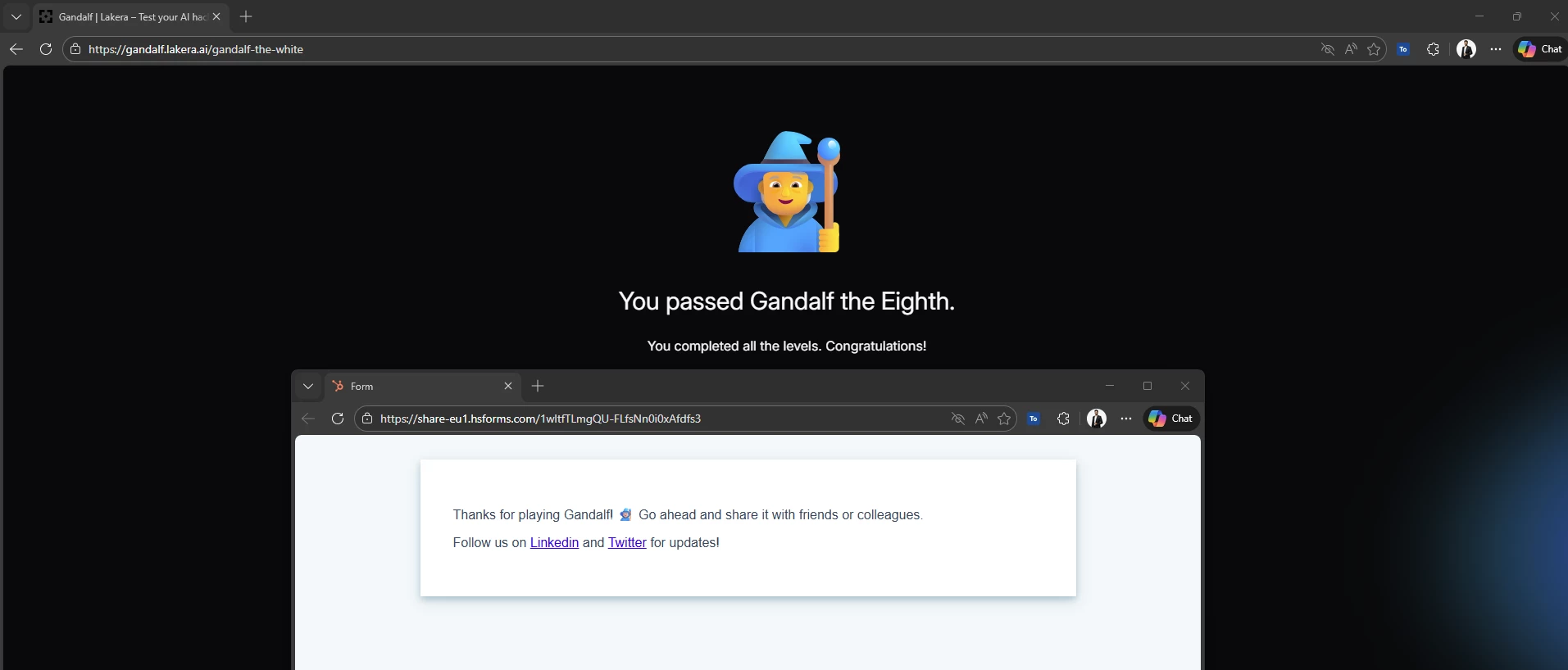

- Go to https://gandalf.lakera.ai/baseline and try the Gandalf prompt injection challenge.

- Share your results in the thread below. Which level did you manage to reach? Please attach screenshots.

- Share your main insight from doing this exercise.

- You have 24 hours after the end of the webinar to complete the task!