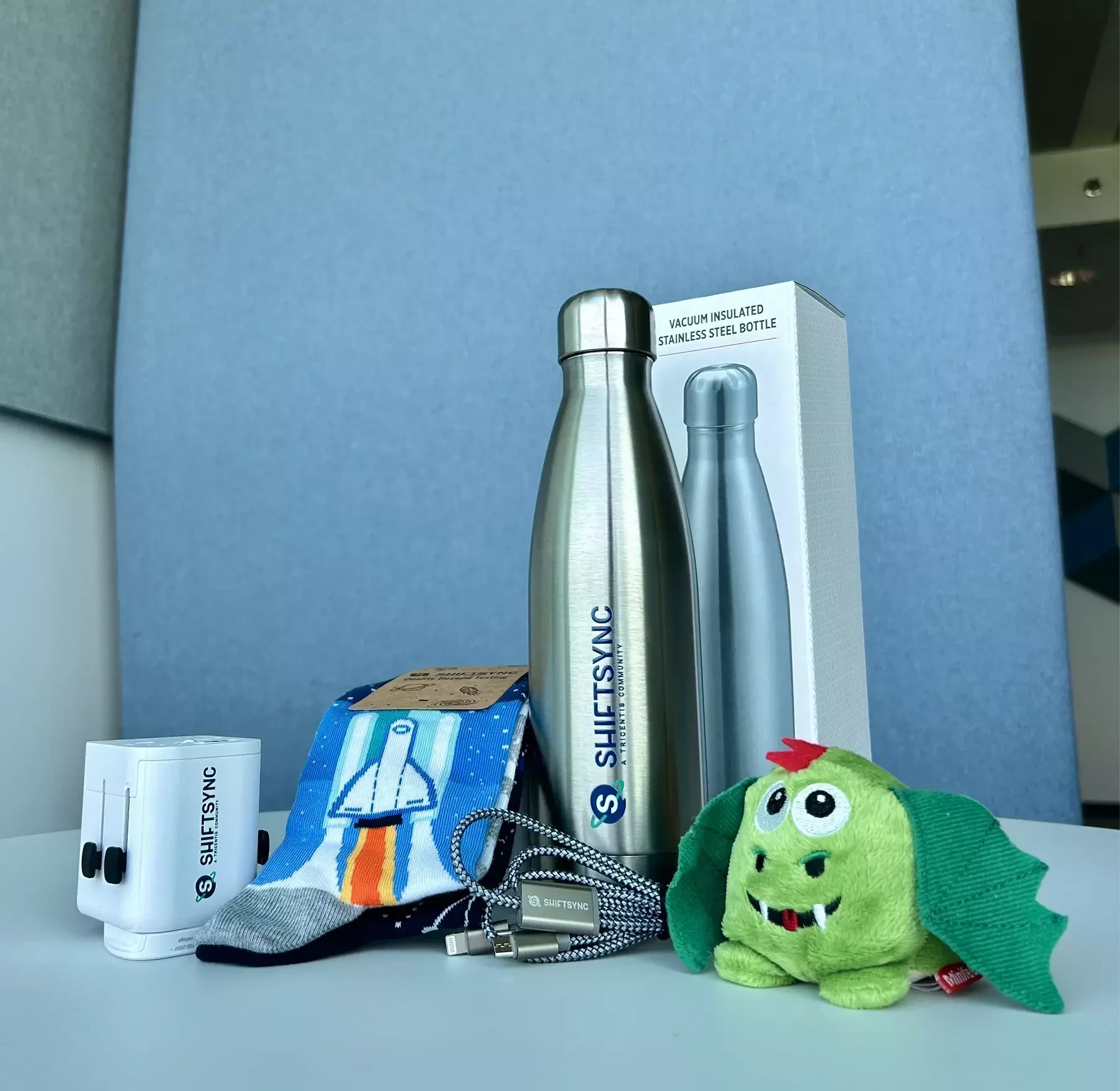

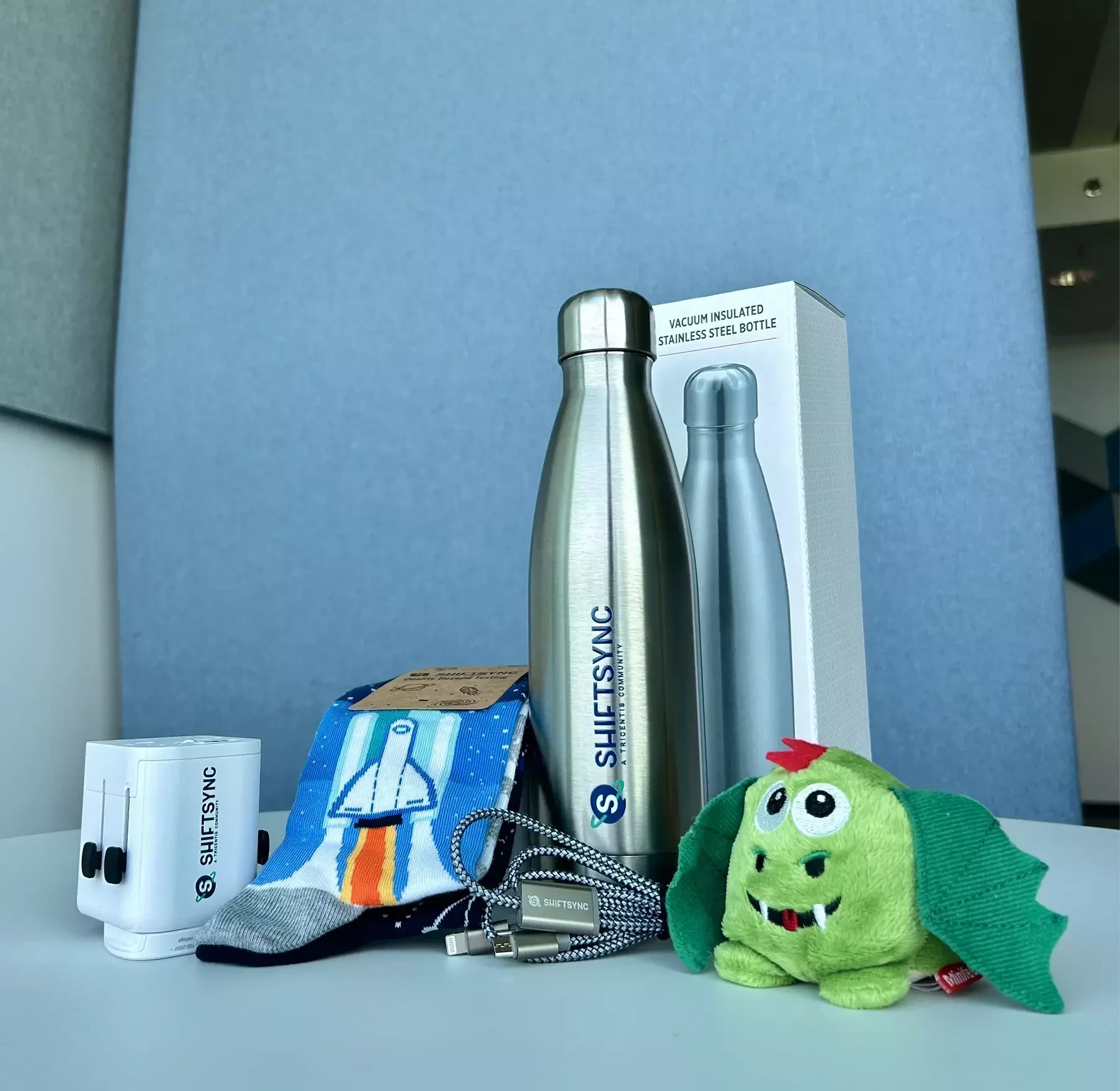

Take on Filip’s challenge for a chance to win! The lucky winner will walk away with a gift box from us!🎁

Non-determinism — AI outputs vary across runs, making traditional assertion-based testing brittle. "Correct" is often subjective or probabilistic, requiring statistical validation and LLM-as-judge approaches.

Observability & explainability — When a model produces a wrong answer, tracing why is hard. There's no stack trace for a bad inference, making root cause analysis and regression prevention fundamentally different from classical software.

Data & prompt drift — Models degrade silently as real-world data distributions shift or prompts change. Continuous quality monitoring (evals, golden datasets, shadow testing) must replace one-time release testing.
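To make the "statistical validation" idea from the first point concrete, here is a minimal sketch that runs the same prompt many times and asserts on the pass rate instead of on a single output. `fake_model`, its 90% success rate, and the 0.8 threshold are all assumptions made up for illustration; a real suite would call the actual model and use a tolerance agreed with the team.

```python
import random


def fake_model(prompt: str, rng: random.Random) -> str:
    """Hypothetical stand-in for a non-deterministic model call."""
    # Assumption: the model answers correctly about 90% of the time.
    return "Paris" if rng.random() < 0.9 else "Lyon"


def pass_rate(prompt, is_acceptable, runs, rng):
    """Fraction of runs whose output satisfies the acceptance check."""
    passed = sum(is_acceptable(fake_model(prompt, rng)) for _ in range(runs))
    return passed / runs


THRESHOLD = 0.8  # assumed tolerance, not a recommended value

rng = random.Random(42)  # seeded so the test run itself is repeatable
rate = pass_rate("Capital of France?", lambda out: out == "Paris", 200, rng)
print(f"pass rate: {rate:.2f}, meets threshold: {rate >= THRESHOLD}")
```

The assertion moves from "this output equals X" to "at least this share of outputs is acceptable", which is what keeps the test from being brittle.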

1. Data Quality & Bias

AI systems are only as good as the data they learn from.

Why it’s a challenge:

Example:

A hiring AI trained on historical data may unintentionally favor certain profiles if past hiring was biased.

2. Explainability & Transparency (Black Box Problem)

Many AI models (especially deep learning) don’t clearly explain why they made a decision.

Why it’s a challenge:

3. Dynamic Behavior & Continuous Learning

Unlike traditional software, AI systems evolve over time.

Why it’s a challenge:

Example:

A recommendation engine today may behave differently next week after retraining.
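One rough way to notice the retraining effect in the recommendation example is to compare the top-k lists from two model versions on a fixed set of users. The sketch below uses Jaccard overlap; the user IDs, item IDs, and the 0.5 alert threshold are hypothetical.

```python
def jaccard(a, b):
    """Jaccard overlap of two item lists, treated as sets."""
    a, b = set(a), set(b)
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def recommendation_shift(before, after):
    """Per-user overlap between the old and new model's top-k lists."""
    return {user: jaccard(items, after.get(user, [])) for user, items in before.items()}


# Hypothetical top-3 recommendations from last week's and this week's model.
before = {"u1": ["a", "b", "c"], "u2": ["d", "e", "f"]}
after = {"u1": ["a", "b", "c"], "u2": ["d", "x", "y"]}

overlap = recommendation_shift(before, after)
flagged = [user for user, score in overlap.items() if score < 0.5]  # assumed threshold
print(overlap, flagged)
```

A low overlap is not automatically a bug, since retraining is supposed to change results, but it tells a reviewer where to look first.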

1️⃣ AI systems built on Machine Learning aren’t fully predictable, so testing shifts from checking exact results to evaluating reliability and behavior patterns.

2️⃣ Data quality and model drift can silently reduce performance over time, making continuous monitoring essential.

3️⃣ Systems powered by Generative AI introduce new risks around trust, safety, and explainability that QA must actively manage.

The three biggest challenges for quality in the AI era are:

1. Believable mistakes: AI doesn’t fail loudly – it fails convincingly. It can give answers that sound right but aren’t, making it harder to detect issues and easier to trust the wrong output.

2. Constantly changing behavior: Unlike traditional systems, AI isn’t stable. Small changes in data, prompts, or models can shift results, so quality isn’t a one-time check – it's something that needs continuous monitoring.

3. Blurred accountability: When AI goes wrong, it’s unclear who’s responsible – the model, the data, or the developer. This makes ensuring fairness, reliability, and trust much more complex.

Quality in AI is no longer just about correctness—it’s about trust, adaptability, and responsibility.

Hallucinations and Accuracy: AI models often generate information that is factually incorrect, nonsensical, or completely fabricated, making rigorous verification essential.

Bias and Fairness: Models can inherit and amplify biases present in their training data, leading to unfair or discriminatory outcomes that must be actively identified and mitigated.

Lack of Explainability (The "Black Box"): The decision-making process of complex AI is often opaque, making it difficult to understand why an error occurred or to trust the output in high-stakes scenarios.

1. We're moving fast, but thinking slow

Yes, AI speeds up our work. But, AI also turns off our brains. That is not good while testing.

2. More tests, less confidence

Yes, AI creates 100 tests in seconds. But, is this the correct set of 100 tests?

3. Nobody owns the bug anymore

Yes, AI created the bug, AI tested it, AI passed it. But, if it fails, then who is responsible?

From a practical QA perspective, three challenges I see are:

1. AI can create a false sense of coverage by generating many tests without ensuring meaningful validation.

2. It can amplify flakiness in already unstable systems, making failures harder to debug.

3. Over-reliance on AI risks reducing deep product understanding, which is critical for identifying real quality gaps.

In the AI era, quality is no longer just about validating functionality — it’s about ensuring trust in systems that are inherently unpredictable.

First, AI systems are non-deterministic, so the same input can produce different outputs. This makes traditional “expected vs actual” validation insufficient, requiring us to define acceptable response boundaries instead.

Second, there’s the challenge of correctness vs plausibility — AI can generate outputs that sound convincing but are factually wrong, making validation much harder than in deterministic systems.

Third, the focus is shifting from just testing outputs to controlling behavior. Techniques like context engineering and structured system design are becoming essential to reduce unpredictability and ensure consistent, reliable results.

Ultimately, the biggest challenge is moving from testing features to building systems that are trustworthy at scale.
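As a sketch of what "acceptable response boundaries" could look like in practice, the check below validates properties of a response (required facts, a length cap, forbidden wording) rather than comparing it to one expected string. The refund scenario, the term lists, and the 40-word limit are invented for illustration.

```python
import re


def check_boundaries(response, required_terms, max_words, forbidden_patterns):
    """Return a list of boundary violations; an empty list means acceptable."""
    problems = []
    lowered = response.lower()
    for term in required_terms:
        if term.lower() not in lowered:
            problems.append(f"missing required term: {term}")
    if len(response.split()) > max_words:
        problems.append("response too long")
    for pattern in forbidden_patterns:
        if re.search(pattern, response, flags=re.IGNORECASE):
            problems.append(f"forbidden pattern matched: {pattern}")
    return problems


# Hypothetical boundaries for a refund-policy answer.
REQUIRED = ["refund", "30 days"]
FORBIDDEN = [r"\bguarantee[ds]?\b"]

ok = check_boundaries("Refunds are available within 30 days of purchase.", REQUIRED, 40, FORBIDDEN)
bad = check_boundaries("We guarantee a refund any time.", REQUIRED, 40, FORBIDDEN)
print(ok, bad)
```

Many differently worded answers can pass the same boundaries, which fits systems where the same input produces different outputs.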

The 3 biggest challenges for quality in the AI era can be the following:

1.> Data Integrity: It is difficult to verify and validate what kind of data is fed to the models and how much they have been trained on it.

2.> Biased Output: The output can’t be trusted at face value because the machine produces results based on what was fed to it, so it becomes difficult to validate.

3.> Accountability & Transparency: Quality becomes questionable because you work on a large amount of data at once, and if something fails it is difficult to pinpoint a single use case, which puts accountability and transparency in doubt.

You need someone who can test intelligence, behavior and unpredictability.

Practically, IMHO

- AI systems don’t produce consistent outputs for the same input, which makes traditional assertion-based testing ineffective. I approach this by focusing on intent-based validation and semantic checks instead of exact matches.

- There’s often no single correct answer in AI systems, making it difficult to define pass/fail criteria. I address this by using multi-dimensional evaluation looking at correctness, relevance, and factual accuracy along with human-in-the-loop validation where needed.

- System performance is heavily dependent on data, and it can degrade over time as data changes. I treat data as a first-class test artifact by versioning datasets, prompts, and models, and by continuously monitoring for drift and quality drops.
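One simple way to version datasets, prompts, and models together, as the last point suggests, is to fingerprint the combination so every eval result can be traced to the exact inputs that produced it. This is only a sketch; the field names, the sample row, and the model identifiers are hypothetical.

```python
import hashlib
import json


def fingerprint(dataset_rows, prompt_template, model_id):
    """Short, stable hash over the dataset, prompt, and model identifier."""
    payload = json.dumps(
        {"dataset": dataset_rows, "prompt": prompt_template, "model": model_id},
        sort_keys=True,       # key order must not change the hash
        ensure_ascii=False,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:12]


rows = [{"input": "Reset my password", "expected_intent": "account_access"}]
v1 = fingerprint(rows, "Classify the intent: {input}", "model-a")
v2 = fingerprint(rows, "Classify the user's intent: {input}", "model-a")
print(v1, v2, v1 != v2)  # a prompt edit yields a new fingerprint
```

Storing the fingerprint next to each eval run makes it easier to tell whether a quality drop came from a data change, a prompt change, or a model change.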

Three challenges I see

1. Unpredictable Outputs

AI can give different answers for the same input, making testing and debugging difficult.

2. Hard to Measure Quality

There’s no single “correct” answer—quality depends on accuracy, relevance, and context.

3. Trust & Safety Issues

AI can be wrong, biased, or unsafe, so ensuring reliable and ethical behavior is a big challenge.

1) Data Quality & Bias

Incomplete data leads to unreliable outputs

Biased datasets amplify unfair or discriminatory results

Outdated data produces irrelevant or incorrect insights

2) Consistency & Reliability at Scale

Same prompt, slightly different outputs

Edge cases can produce errors or hallucinations

Performance may degrade over time

3) Explainability

3 biggest Quality Challenges in AI Era

1. AI hallucination and drift

It gets frustrating at times to get the model to respond to the prompts with the expected answer.

2. Training for Quality Engineers on context engineering

Quality Engineers need proper training on good prompting practices and on building skills.

Without it, context can bloat, increasing token usage and costs.

3. Response is nondeterministic. I find it difficult to tell whether a prompt is accurate, what its precision is, and what metrics can be used to assess a prompt.
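On the question of metrics for a prompt: one common starting point is to run the prompt over a small labeled set and compute precision and recall. The sketch below assumes a hypothetical prompt that flags support tickets as urgent; the predictions and labels are made-up data.

```python
def precision_recall(predicted, expected):
    """Precision and recall for boolean predictions against boolean labels."""
    pairs = list(zip(predicted, expected))
    tp = sum(1 for p, e in pairs if p and e)
    fp = sum(1 for p, e in pairs if p and not e)
    fn = sum(1 for p, e in pairs if not p and e)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


# Hypothetical results: did the prompt flag each ticket as urgent?
predicted = [True, True, False, True, False]
expected = [True, False, False, True, True]

precision, recall = precision_recall(predicted, expected)
print(f"precision={precision:.2f} recall={recall:.2f}")
```

Because responses are nondeterministic, these numbers are more useful when averaged over several runs of the same labeled set than when taken from a single run.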

The three biggest challenges for quality in the AI era are ensuring fairness and eliminating bias, maintaining transparency and explainability, and safeguarding compliance and ethical standards. These issues directly affect trust, adoption, and the long-term sustainability of AI systems.
